Announcing Next Generation Pulumi Azure Provider

We are excited to announce the beta release of a next-generation Microsoft Azure provider for Pulumi. Azure usage among Pulumi users has grown rapidly over the last year, and with the next-generation Azure provider, we are doubling down on providing the best possible support for the Azure platform in Pulumi. We designed the new provider to expose the entire API surface of Azure to developers and operators, now and forever.

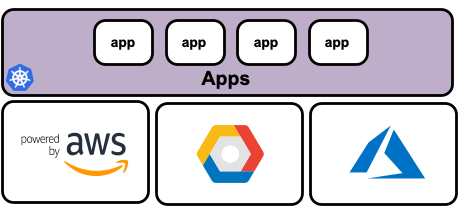

The new Azure provider for Pulumi (azure-nextgen) works directly with the Azure Resource Manager (ARM) platform rather than depending on a handwritten layer, as the previous provider did. This approach ensures higher quality and higher fidelity with the Azure platform.
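
To make "works directly with ARM" a little more concrete, here is a minimal TypeScript sketch of how a declared resource group could map onto the ARM REST call that creates or updates it. This is an illustration only, not the provider's actual implementation: the `ResourceGroupSpec` and `ArmRequest` types and the `toArmRequest` helper are hypothetical names, and the subscription ID and API version are example values.

```typescript
// Hypothetical shape of a declared resource group (illustrative field names).
interface ResourceGroupSpec {
  subscriptionId: string;
  resourceGroupName: string;
  location: string;
}

// Hypothetical description of the HTTP request sent to ARM.
interface ArmRequest {
  method: "PUT";
  url: string;
  body: { location: string };
}

// Build the ARM "create or update" request for a resource group.
// The API version is passed explicitly because every ARM call is pinned to one.
function toArmRequest(spec: ResourceGroupSpec, apiVersion: string): ArmRequest {
  const path =
    `/subscriptions/${encodeURIComponent(spec.subscriptionId)}` +
    `/resourcegroups/${encodeURIComponent(spec.resourceGroupName)}`;
  return {
    method: "PUT",
    url: `https://management.azure.com${path}?api-version=${encodeURIComponent(apiVersion)}`,
    body: { location: spec.location },
  };
}

// Example values only: a placeholder subscription ID and an example API version.
const request = toArmRequest(
  {
    subscriptionId: "00000000-0000-0000-0000-000000000000",
    resourceGroupName: "my-rg",
    location: "westus2",
  },
  "2020-06-01",
);
console.log(request.method, request.url);
```

Because the declared properties map one-to-one onto the request, there is no intermediate layer whose fields could drift from what ARM accepts, which is the fidelity benefit described above.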