Introducing pulumi do: Direct Resource Operations for Any Cloud

Infrastructure as code is the right model for production systems. State tracking, drift detection, and repeatable deployments all matter when you’re managing real workloads.

But sometimes you just need a quick, one-off interaction with the cloud: creating a bucket or a database, looking up a VPC, or deleting a stray resource.

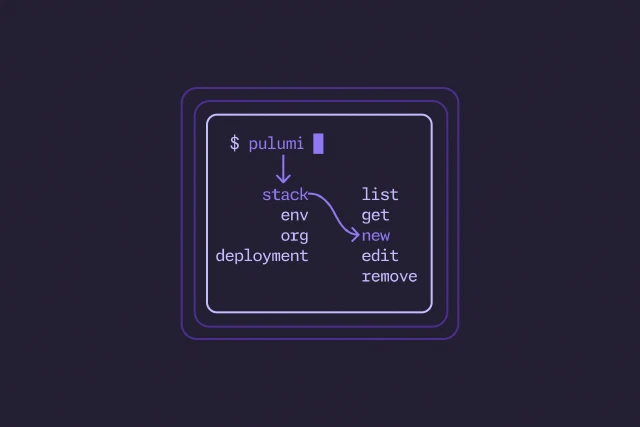

Today we’re introducing `pulumi do`, a new command for direct resource operations. With `pulumi do`, you can create, read, update, delete, and query any cloud resource from the terminal with a single command, across thousands of Pulumi-supported providers, with no project, code, or state required.